The picks from all the speakers in our Best of 2024 series catches you up for 2024, but since we wrote about running Paper Clubs, we’ve been asked many times for a reading list to recommend for those starting from scratch at work or with friends. We started with the 2023 a16z Canon, but it needs a 2025 update and a practical focus.

Here we curate “required reads” for the AI engineer. Our design goals are:

- pick ~50 papers (~one per week for a year), optional extras. Arbitrary constraint.

- tell you why this paper matters instead of just name drop without helpful context

- be very practical for the AI Engineer; no time wasted on Attention is All You Need, bc 1) everyone else already starts there, 2) most won’t really need it at work

We ended up picking 5 “papers” per section for:

- Section 1: Frontier LLMs

- Section 2: Benchmarks and Evals

- Section 3: Prompting, ICL & Chain of Thought

- Section 4: Retrieval Augmented Generation

- Section 5: Agents

- Section 6: Code Generation

- Section 7: Vision

- Section 8: Voice

- Section 9: Image/Video Diffusion

- Section 10: Finetuning

Section 1: Frontier LLMs

- GPT1, GPT2, GPT3, Codex, InstructGPT, GPT4 papers. Self explanatory. GPT3.5, 4o, o1, and o3 tended to have launch events and system cards instead.

- Claude 3 and Gemini 1 papers to understand the competition. Latest iterations are Claude 3.5 Sonnet and Gemini 2.0 Flash/Flash Thinking. Also Gemma 2.

- LLaMA 1, Llama 2, Llama 3 papers to understand the leading open models. You can also view Mistral 7B, Mixtral and Pixtral as a branch on the Llama family tree.

- DeepSeek V1, Coder, MoE, V2, V3 papers. Leading (relatively) open model lab.

- Apple Intelligence paper. It’s on every Mac and iPhone.

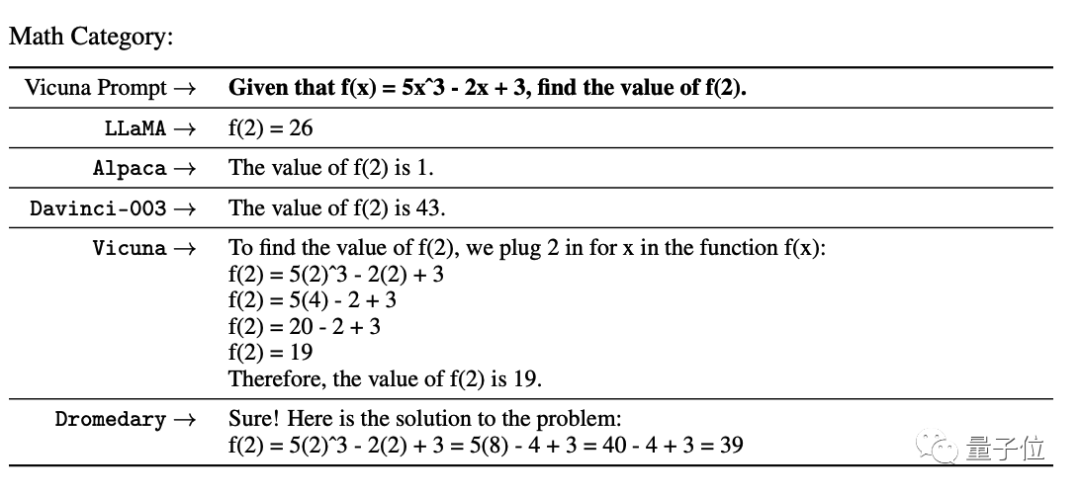

Honorable mentions: AI2 (Olmo, Molmo, OlmOE, Tülu 3, Olmo 2), Grok, Amazon Nova, Yi, Reka, Jamba, Cohere, Nemotron, Microsoft Phi, HuggingFace SmolLM – mostly lower in ranking or lack papers. Alpaca and Vicuna are historical interest, while Mamba 1/2 and RWKV are potential future interest. If time allows, we recommend the Scaling Law literature: Kaplan, Chinchilla, Emergence / Mirage, Post-Chinchilla laws.

Section 2: Benchmarks and Evals

- MMLU paper – the main knowledge benchmark, next to GPQA and BIG-Bench. In 2025 frontier labs use MMLU Pro, GPQA Diamond, and BIG-Bench Hard.

- MuSR paper – evaluating long context, next to LongBench, BABILong, and RULER. Solving Lost in The Middle and other issues with Needle in a Haystack.

- MATH paper – a compilation of math competition problems. Frontier labs focus subsets of MATH: MATH level 5, AIME, FrontierMath, AMC10/AMC12.

- IFEval paper – the leading instruction following eval and only external benchmark adopted by Apple. You could also view MT-Bench as a form of IF.

- ARC AGI challenge – a famous abstract reasoning “IQ test” benchmark that has lasted far longer than many quickly saturated benchmarks.

We covered many of these in Benchmarks 101 and Benchmarks 201, while our Carlini, LMArena, and Braintrust episodes covered private, arena, and product evals (read Hamel on LLM-as-Judge). Benchmarks are closely linked to Datasets.

Section 3: Prompting, ICL & Chain of Thought

Note: The GPT3 paper (“Language Models are Few-Shot Learners”) should already have introduced In-Context Learning (ICL) – a close cousin of prompting. We also consider prompt injections required knowledge — Lilian Weng, Simon W.

- The Prompt Report paper – a survey of prompting papers (podcast).

- Chain-of-Thought paper – one of multiple claimants to popularizing Chain of Thought, along with Scratchpads and Let’s Think Step By Step

- Tree of Thought paper – introducing lookaheads and backtracking (podcast)

- Prompt Tuning paper – you may not need prompts – if you can do Prefix-Tuning, adjust decoding (say via entropy), or representation engineering

- Automatic Prompt Engineering paper – it is increasingly obvious that humans are terrible zero-shot prompters and prompting itself can be enhanced by LLMs. The most notable implementation of this is in the DSPy paper/framework.

Section 3 is one area where reading disparate papers may not be as useful as having more practical guides – we recommend Lilian Weng, Eugene Yan, and Anthropic’s Prompt Engineering Tutorial and AI Engineer Workshop.

Section 4: Retrieval Augmented Generation

- Introduction to Information Retrieval – a bit unfair to recommend a book, but we are trying to make the point that RAG is an IR problem and IR has a 60 year history that includes TF-IDF, BM25, FAISS, HNSW and other “boring” techniques.

- 2020 Meta RAG paper – which coined the term. The original authors have started Contextual and have coined RAG 2.0. Modern “table stakes” for RAG — HyDE, chunking, rerankers, multimodal data are better presented elsewhere.

- MTEB: Massive Text Embedding Benchmark paper – the de-facto leader, with known issues. Many embeddings have papers – pick your poison – OpenAI, Nomic Embed, Jina v3, cde-small-v1 – with Matryoshka embeddings increasingly standard.

- GraphRAG paper – Microsoft’s take on adding knowledge graphs to RAG, now open sourced. One of the most popular trends in RAG in 2024, alongside of ColBERT/ColPali/ColQwen (more in the Vision section).

- RAGAS paper – the simple RAG eval recommended by OpenAI. See also Nvidia FACTS framework and Extrinsic Hallucinations in LLMs – Lilian Weng’s survey of causes/evals for hallucinations.

RAG is the bread and butter of AI Engineering at work in 2024, so there are a LOT of industry resources and practical experience you will be expected to have. LlamaIndex (course) and LangChain (video) have perhaps invested the most in educational resources. You should also be familiar with the perennial RAG vs Long Context debate.

Section 5: Agents

- SWE-Bench paper (our podcast) – after adoption by Anthropic, Devin and OpenAI, probably the highest profile agent benchmark today (vs WebArena or SWE-Gym). Technically a coding benchmark, but more a test of agents than raw LLMs. See also SWE-Agent, SWE-Bench Multimodal and the Konwinski Prize.

- ReAct paper (our podcast) – ReAct started a long line of research on tool using and function calling LLMs, including Gorilla and the BFCL Leaderboard. Of historical interest – Toolformer and HuggingGPT.

- MemGPT paper – one of many notable approaches to emulating long running agent memory, adopted by ChatGPT and LangGraph. Versions of these are reinvented in every agent system from MetaGPT to AutoGen to Smallville.

- Voyager paper – Nvidia’s take on 3 cognitive architecture components (curriculum, skill library, sandbox) to improve performance. More abstractly, skill library/curriculum can be abstracted as a form of Agent Workflow Memory.

- Anthropic on Building Effective Agents – just a great end-2024 recap that focuses on the importance of chaining, routing, parallelization, orchestration, evaluation, and optimization. See also OpenAI Swarm.

We covered many of the 2024 SOTA agent designs at NeurIPS. Note that we skipped bikeshedding agent definitions, but if you really need one, you could use mine.

Section 6: Code Generation

- The Stack paper – the original open dataset twin of The Pile focused on code, starting a great lineage of open codegen work from The Stack v2 to StarCoder.

- Open Code Model papers – choose from DeepSeek-Coder, Qwen2.5-Coder, or CodeLlama. Many regard 3.5 Sonnet as the best code model but it has no paper.

- HumanEval/Codex paper – This is a saturated benchmark, but is required knowledge for the code domain. SWE-Bench is more famous for coding now, but is expensive/evals agents rather than models. Modern replacements include Aider, Codeforces, BigCodeBench, LiveCodeBench and SciCode.

- AlphaCodeium paper – Google published AlphaCode and AlphaCode2 which did very well on programming problems, but here is one way Flow Engineering can add a lot more performance to any given base model.

- CriticGPT paper – LLMs are known to generate code that can have security issues. OpenAI trained CriticGPT to spot them, and Anthropic uses SAEs to identify LLM features that cause this, but it is a problem you should be aware of.

CodeGen is another field where much of the frontier has moved from research to industry and practical engineering advice on codegen and code agents like Devin are only found in industry blogposts and talks rather than research papers.

Section 7: Vision

- Non-LLM Vision work is still important: e.g. the YOLO paper (now up to v11), but increasingly transformers like DETRs Beat YOLOs too.

- CLIP paper – the first successful ViT from Alec Radford. These days, superceded by BLIP/BLIP2 or SigLIP/PaliGemma, but still required to know.

- MMVP benchmark (LS Live)- quantifies important issues with CLIP. Multimodal versions of MMLU (MMMU) and SWE-Bench do exist.

- Segment Anything Model and SAM 2 paper (our pod) – the very successful image and video segmentation foundation model. Pair with GroundingDINO.

- Early fusion research: Contra the cheap “late fusion” work like LLaVA (our pod), early fusion covers Meta’s Flamingo, Chameleon, Apple’s AIMv2, Reka Core, et al. In reality there are at least 4 streams of visual LM work.

Much frontier VLM work these days is no longer published (the last we really got was GPT4V system card and derivative papers). We recommend having working experience with vision capabilities of 4o (including finetuning 4o vision), Claude 3.5 Sonnet/Haiku, Gemini 2.0 Flash, and o1. Others: Pixtral, Llama 3.2, Moondream, QVQ.

Section 8: Voice

- Whisper paper – the successful ASR model from Alec Radford. Whisper v2, v3 and distil-whisper and v3 Turbo are open weights but have no paper.

- AudioPaLM paper – our last look at Google’s voice thoughts before PaLM became Gemini. See also: Meta’s Llama 3 explorations into speech.

- NaturalSpeech paper – one of a few leading TTS approaches. Recently v3.

- Kyutai Moshi paper – an impressive full-duplex speech-text open weights model with high profile demo. See also Hume OCTAVE.

- OpenAI Realtime API: The Missing Manual – Again, frontier omnimodel work is not published, but we did our best to document the Realtime API.

We do recommend diversifying from the big labs here for now – try Daily, Livekit, Vapi, Assembly, Deepgram, Fireworks, Cartesia, Elevenlabs etc. See the State of Voice 2024. While NotebookLM’s voice model is not public, we got the deepest description of the modeling process that we know of.

With Gemini 2.0 also being natively voice and vision multimodal, the Voice and Vision modalities are on a clear path to merging in 2025 and beyond.

Section 9: Image/Video Diffusion

- Latent Diffusion paper – effectively the Stable Diffusion paper. See also SD2, SDXL, SD3 papers. These days the team is working on BFL Flux [schnell|dev|pro].

- DALL-E / DALL-E-2 / DALL-E-3 paper – OpenAI’s image generation.

- Imagen / Imagen 2 / Imagen 3 paper – Google’s image gen. See also Ideogram.

- Consistency Models paper – this distillation work with LCMs spawned the quick draw viral moment of Dec 2023. These days, updated with sCMs.

- Sora blogpost – text to video – no paper of course beyond the DiT paper (same authors), but still the most significant launch of the year, with many open weights competitors like OpenSora. Lilian Weng survey here.

We also highly recommend familiarity with ComfyUI (upcoming episode). Text Diffusion, Music Diffusion, and autoregressive image generation are niche but rising.

Section 10: Finetuning

- LoRA/QLoRA paper – the de facto way to finetune models cheaply, whether on local models or with 4o (confirmed on pod). FSDP+QLoRA is educational.

- DPO paper – the popular, if slightly inferior, alternative to PPO, now supported by OpenAI as Preference Finetuning.

- ReFT paper – instead of finetuning a few layers, focus on features instead.

- Orca 3/AgentInstruct paper – see the Synthetic Data picks at NeurIPS but this is a great way to get finetue data.

- RL/Reasoning Tuning papers – RL Finetuning for o1 is debated, but Let’s Verify Step By Step and Noam Brown’s many public talks give hints for how it works.

We recommend going thru the Unsloth notebooks for finetuning open models. This is obviously an endlessly deep rabbit hole that, at the extreme, overlaps with the Research Scientist track.

© 版权声明

文章版权归作者所有,未经允许请勿转载。

相关文章

暂无评论...